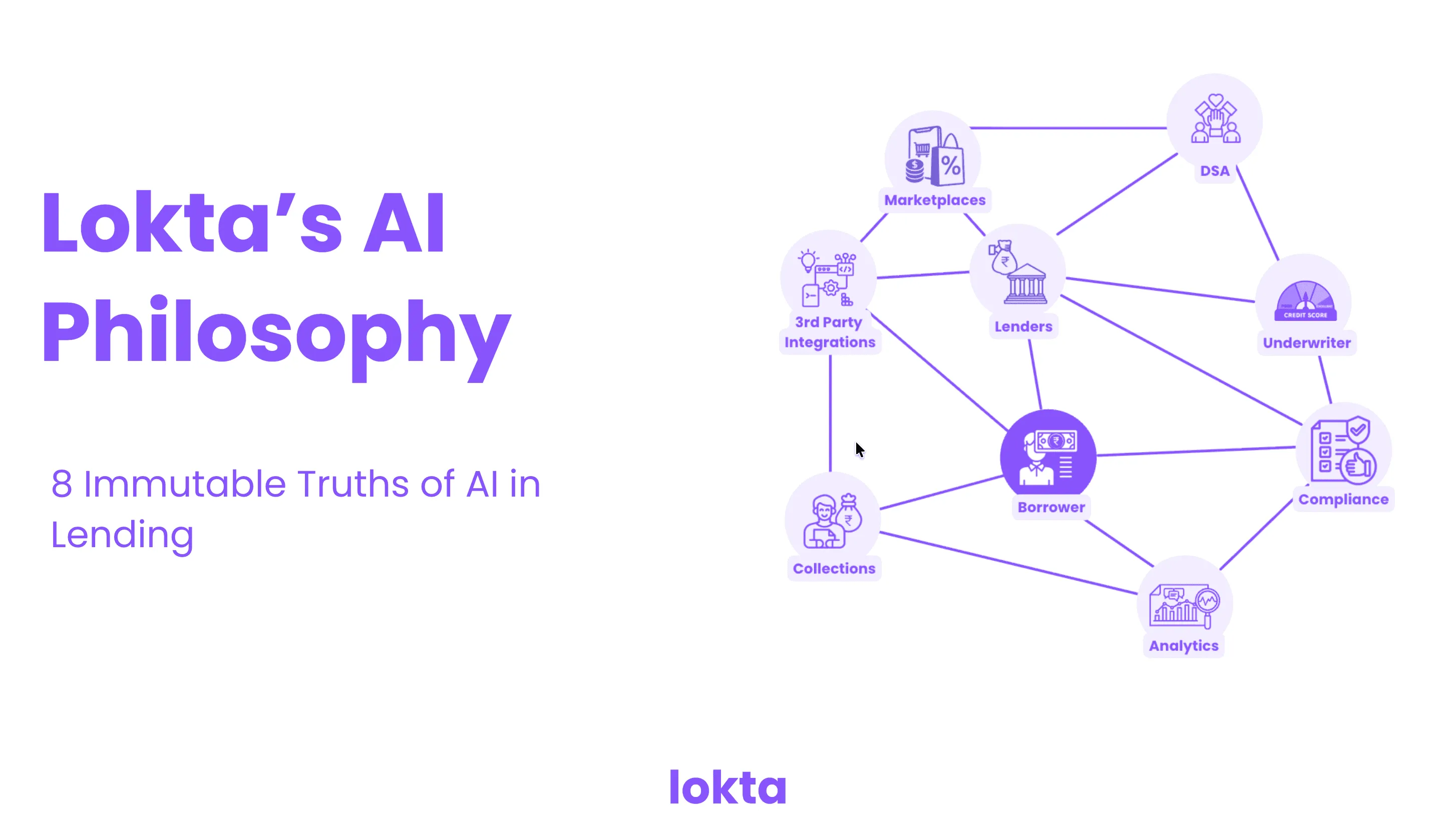

Lokta's Philosophy for Auditable AI in Lending

Eight truths that define how Lokta uses AI in lending — deterministic core, evidence-grounded outputs, human-controlled decisions, built-in auditability.

AI in lending is no longer an experiment. The question is not whether to use it but how to use it without breaking the things lending cannot afford to break: trust, compliance, and the audit trail that regulators and your own board will ask to see.

We have spent the last two decades building lending systems and the last two years thinking about exactly this question. Lokta’s approach to AI in lending comes down to eight immutable truths — the rules we hold ourselves to, and the rules we believe any responsible AI-in-lending platform must hold itself to.

1. Deterministic core, AI as an intelligence layer

Core financial operations — money movement, schedules, regulatory records, state transitions — must remain deterministic and replayable. AI sits above this core as an intelligence layer. Its role is to reason, orchestrate, summarise, and recommend. It never runs the loan book itself.
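
As a rough illustration of what "deterministic and replayable" can mean in practice, the sketch below rebuilds a loan balance by folding over an ordered event list, while the AI layer only reads the resulting state. The event kinds, class names, and the `suggest` stand-in are assumptions made for this example, not Lokta's actual data model.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Iterable


@dataclass(frozen=True)
class LoanEvent:
    kind: str        # "DISBURSED" or "REPAYMENT" (hypothetical event kinds)
    amount: Decimal


@dataclass(frozen=True)
class LoanState:
    outstanding: Decimal = Decimal("0")


def apply_event(state: LoanState, event: LoanEvent) -> LoanState:
    """Pure transition: the same state and event always give the same result."""
    if event.kind == "DISBURSED":
        return LoanState(state.outstanding + event.amount)
    if event.kind == "REPAYMENT":
        return LoanState(state.outstanding - event.amount)
    raise ValueError(f"unknown event kind: {event.kind}")


def replay(events: Iterable[LoanEvent]) -> LoanState:
    """Rebuild the state from scratch by applying every event in order."""
    state = LoanState()
    for event in events:
        state = apply_event(state, event)
    return state


@dataclass(frozen=True)
class AiSuggestion:
    """Advisory output only: it carries text, never ledger events."""
    summary: str


def suggest(state: LoanState) -> AiSuggestion:
    # Stand-in for a model call; it reads the state but cannot change it.
    return AiSuggestion(f"Outstanding balance is {state.outstanding}.")


ledger = [
    LoanEvent("DISBURSED", Decimal("1000.00")),
    LoanEvent("REPAYMENT", Decimal("250.00")),
]
```

Calling `replay(ledger)` twice yields equal states, which is the property an auditor relies on. Because `suggest` returns only an `AiSuggestion`, nothing it produces can be appended to the ledger by accident.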

2. No black-box decisions

AI at Lokta is explicitly not allowed to make regulated, core decisions on its own. Three categories stay under human accountability:

- Approving or rejecting loans.

- Setting prices or waiving fees.

- Triggering legal escalations or overriding controls.

These decisions are governed by what we internally call the Regulated Formula: Rules + Human Accountability + Approval Matrix.
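
One way to picture the Regulated Formula is as a lookup that an action must pass before it can be committed. The sketch below is a minimal version under stated assumptions: the actions, amount limits, and role names are invented for illustration, and a role must match the matrix entry exactly.

```python
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical approval matrix: action -> ordered (amount limit, required role).
APPROVAL_MATRIX = {
    "approve_loan": [
        (Decimal("50000"), "underwriter"),
        (Decimal("500000"), "credit_committee"),
    ],
    "waive_fee": [(Decimal("500"), "operations_lead")],
}


@dataclass(frozen=True)
class Actor:
    name: str
    role: str
    is_human: bool


def required_role(action: str, amount: Decimal) -> str:
    """Rules: find the first matrix tier whose limit covers the amount."""
    for limit, role in APPROVAL_MATRIX[action]:
        if amount <= limit:
            return role
    raise PermissionError(f"{action} of {amount} exceeds every limit in the matrix")


def authorise(action: str, amount: Decimal, actor: Actor) -> bool:
    """Human accountability: a non-human actor can never authorise."""
    if not actor.is_human:
        return False
    return actor.role == required_role(action, amount)
```

With this shape, an AI agent holding the "underwriter" role label is still refused, while a human underwriter can approve a 20,000 loan but not a 200,000 one.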

3. No client data for training

We design AI workflows, not custom-trained ML models. The architecture relies on:

- Retrieval-Augmented Generation (RAG).

- Prompting and agent tools.

- Knowledge graphs and deterministic simulations.

This means we do not need to use sensitive client data for model training. The privacy posture is structural, not promised.
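
To make the retrieval idea concrete, here is a toy sketch of RAG-style prompt assembly: relevant text is looked up at query time and placed in the prompt, and no model weights are touched. The document store, the SOP identifiers, and the token-overlap ranking are all assumptions for illustration; a production retriever would more likely use embedding search.

```python
import re

# Hypothetical policy snippets; in practice these would come from a document store.
DOCUMENTS = {
    "SOP-12": "Income verification requires two recent payslips or a bank statement.",
    "SOP-31": "Late fees may be waived once per year with operations lead approval.",
    "SOP-44": "Collateral valuation must be refreshed every twelve months.",
}


def tokens(text: str) -> set[str]:
    """Lower-case word set used for a crude overlap score."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the ids of the k documents sharing the most words with the question."""
    q = tokens(question)
    ranked = sorted(
        DOCUMENTS,
        key=lambda doc_id: len(q & tokens(DOCUMENTS[doc_id])),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str) -> str:
    """Assemble a prompt whose context is retrieved at query time, not trained in."""
    context = "\n".join(
        f"[{doc_id}] {DOCUMENTS[doc_id]}" for doc_id in retrieve(question)
    )
    return (
        "Answer using only the sources below and cite them.\n"
        f"{context}\n\nQuestion: {question}"
    )
```

For the question "When can late fees be waived?", `retrieve` selects `SOP-31`, and the prompt carries that clause with its identifier so the answer can cite it.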

4. Grounded by evidence and policy

Every AI output is provably connected to verifiable facts. Outputs include citations and constraints, so a human can verify and an auditor can replay the decision process. Recommendations are anchored to two pillars:

- Evidence — documents, bureau data, system events, with full provenance.

- Policy — versioned rules and SOP clauses.
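
The two pillars can be sketched as a data shape plus a check that refuses any output missing either one. The class and field names below are hypothetical, and the check only confirms that cited identifiers exist; it does not judge whether the evidence actually supports the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvidenceRef:
    source_id: str   # document, bureau record, or system event id (hypothetical)
    locator: str     # page, field, or offset within that source


@dataclass(frozen=True)
class PolicyRef:
    clause_id: str
    version: str     # policy rules are versioned, so the version is part of the citation


@dataclass(frozen=True)
class Recommendation:
    text: str
    evidence: tuple[EvidenceRef, ...] = ()
    policy: tuple[PolicyRef, ...] = ()


def is_grounded(
    rec: Recommendation,
    known_sources: set[str],
    known_clauses: set[tuple[str, str]],
) -> bool:
    """Accept only outputs that cite at least one known source and one known clause."""
    if not rec.evidence or not rec.policy:
        return False
    return all(e.source_id in known_sources for e in rec.evidence) and all(
        (p.clause_id, p.version) in known_clauses for p in rec.policy
    )
```

A recommendation with no citations, or one citing a policy version that is not on record, fails the check and would not be shown to the user as grounded.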

5. Assistive and advisory by design

AI assists. It does not commit. Its functions are bounded:

- Drafting — credit memos, narratives, scripts.

- Explaining — risk drivers and reconciliations.

- Suggesting — scenarios, playbooks, mappings.

- Triage — root cause analysis, identifying missing documents.

Final decisions and commitments are always explicit, approved user actions.

6. Auditability as architecture

Auditability is a first-class artifact of the system, not a report you generate later. Every AI interaction is logged, and an “Explain Pack” can be produced on demand for any of them. Each pack captures the prompt version, context sources, tool calls, final output, user edits, and approvals, so the trail from outcome back to evidence is complete.
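
A minimal sketch of what such a pack might look like as a record follows. The six fields come from the description above; the class name, the JSON serialisation, and the SHA-256 digest used for tamper evidence are assumptions added for illustration.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ExplainPack:
    prompt_version: str
    context_sources: tuple[str, ...]
    tool_calls: tuple[str, ...]
    final_output: str
    user_edits: tuple[str, ...]
    approvals: tuple[str, ...]

    def to_json(self) -> str:
        """Stable serialisation: sorted keys give the same bytes for the same pack."""
        return json.dumps(asdict(self), sort_keys=True)

    def digest(self) -> str:
        """Hypothetical tamper-evidence: a SHA-256 hash over the serialised pack."""
        return hashlib.sha256(self.to_json().encode("utf-8")).hexdigest()


pack = ExplainPack(
    prompt_version="credit-memo/v7",
    context_sources=("bank-stmt-2024-03", "SOP-12@v3"),
    tool_calls=("fetch_bureau_report",),
    final_output="Draft credit memo text",
    user_edits=("Tightened the income paragraph",),
    approvals=("underwriter:asha",),
)
```

Storing `pack.digest()` alongside the log entry lets a reviewer confirm later that the pack they are reading is the one that was recorded.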

7. Safe iteration with governance

Changes to AI logic ship with controlled rollouts and explicit governance. Prompts and agents are versioned. Every change is gated by:

- Deterministic test cases.

- Real-case evaluation suites.

- PII redaction and consent rules.
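
The three gates can be pictured as checks that a candidate prompt version must pass before rollout. In the sketch below a plain function stands in for a prompt version, the redaction covers email addresses only, and consent rules are not modelled; every name and threshold is an assumption for illustration.

```python
import re
from typing import Callable

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def redact_pii(text: str) -> str:
    """Toy redaction: masks email addresses only."""
    return EMAIL.sub("[REDACTED_EMAIL]", text)


def release_gates(
    candidate: Callable[[str], str],
    golden_cases: list[tuple[str, str]],
    eval_cases: list[tuple[str, Callable[[str], bool]]],
    min_pass_rate: float = 0.9,
) -> dict[str, bool]:
    """Run the three gates and report each result separately."""
    if not eval_cases:
        raise ValueError("evaluation suite must not be empty")
    outputs = [(candidate(text), check) for text, check in eval_cases]
    passed = sum(1 for out, check in outputs if check(out))
    return {
        # Gate 1: exact expected outputs on fixed inputs.
        "deterministic_tests": all(
            candidate(text) == expected for text, expected in golden_cases
        ),
        # Gate 2: share of real-case checks that pass.
        "evaluation_suite": passed / len(outputs) >= min_pass_rate,
        # Gate 3: no unredacted email address in any output.
        "pii_redaction": all(EMAIL.search(out) is None for out, _ in outputs),
    }


def may_ship(gates: dict[str, bool]) -> bool:
    return all(gates.values())


def prompt_v2(text: str) -> str:
    # Stand-in for a new prompt version that redacts before returning.
    return redact_pii(f"Summary: {text}")


def prompt_leaky(text: str) -> str:
    # Stand-in for a version that forgets to redact.
    return f"Summary: {text}"


GOLDEN = [("loan 42 is current", "Summary: loan 42 is current")]
EVALS = [("contact jane@example.com about arrears", lambda out: "arrears" in out)]
```

Here `prompt_v2` passes all three gates, while `prompt_leaky` passes the first two and is blocked by the redaction gate, so it would not ship.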

8. Human agency stays central

AI reduces operational burden and increases clarity. It does not replace accountability. The loan book belongs to underwriters, compliance officers, finance controllers, and operations leads — and the platform is built so they can see why a recommendation was made, review the sources, and fully own the final decision.

That is the philosophy. The eight rules are non-negotiable. Everything else we ship — workflows, agents, integrations — is built to honour them.